The EU AI Act: Shaping the Future of AI Regulation and Industry Response

“We will be hiring lawyers while the rest of the world is hiring coders.” Cecilia Bonefeld-Dahl, director-general for DigitalEurope

In a bold move to position itself as the "global hub for trustworthy AI," the European Union has introduced the AI Act, a comprehensive piece of legislation aimed at regulating artificial intelligence. This groundbreaking law not only sets a new standard for AI governance but has also prompted swift responses from tech giants worldwide. Let's delve into the key aspects of this regulation and its potential impact on the AI landscape.

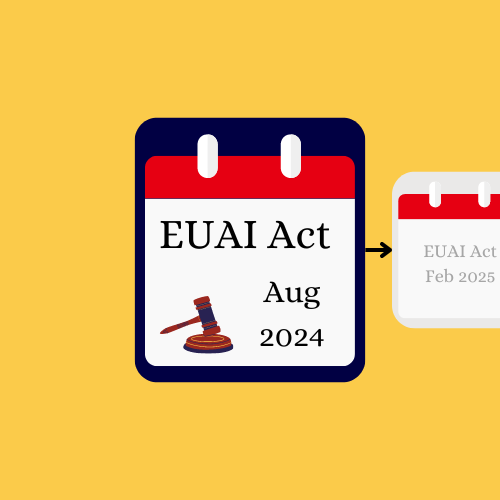

The EU AI Act: A Timeline of Implementation

The EU AI Act introduces a phased approach to regulation enforcement:

August 2024: The AI Act officially enters into force, setting the stage for a series of prohibitions and obligations.

February 2025: Prohibitions on "unacceptable risk" AI systems take effect. These include AI designed to manipulate or deceive people into changing their behaviour, as well as systems that evaluate people through "social scoring."

August 2025: Obligations begin for providers of "general purpose AI" models, which power tools like ChatGPT or Google Gemini.

August 2026: Rules apply to "high risk" AI systems, encompassing areas such as biometrics, critical infrastructure, education, and employment.
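
For teams tracking compliance, the phased schedule above can be captured as a small lookup. This is a minimal Python sketch, not legal guidance: `MILESTONES` and `milestones_in_effect` are hypothetical names chosen for illustration, and because the timeline above gives only months, the code works at month granularity and treats a milestone as active from the first day of its month.

```python
from datetime import date

# Milestones as (year, month, description), taken from the timeline above.
# Only year and month are recorded because the article gives no exact days.
MILESTONES = [
    (2024, 8, "AI Act enters into force"),
    (2025, 2, "Prohibitions on unacceptable-risk AI systems take effect"),
    (2025, 8, "Obligations begin for general purpose AI model providers"),
    (2026, 8, "Rules apply to high-risk AI systems"),
]


def milestones_in_effect(on: date) -> list[str]:
    """Return descriptions of milestones whose month has started by `on`."""
    return [
        text
        for year, month, text in MILESTONES
        if (on.year, on.month) >= (year, month)
    ]


if __name__ == "__main__":
    # Example: list what already applies in mid-March 2025.
    for line in milestones_in_effect(date(2025, 3, 15)):
        print(line)
```

A business could run a check like this against its own launch dates to see which phase of the Act a product release would fall under, then confirm the exact application dates with counsel.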

A Risk-Based Approach to AI Regulation

The AI Act classifies AI systems into four risk categories, each with its own regulatory requirements:

Minimal Risk: Systems like AI-enabled video games or spam filters fall under this category and remain unregulated.

Limited Risk: This includes chatbots and systems generating text and images. They face "light regulation," such as transparency obligations to inform users they're interacting with AI.

High Risk: Systems used in law enforcement, biometric identification, emotion recognition, or critical infrastructure face the most stringent requirements of any AI systems that remain permitted.

Unacceptable Risk: These prohibited systems include AI that could deceive or manipulate human behaviour, evaluate people based on social behaviour or personal traits, or profile individuals as potential criminals.
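
The four tiers above amount to a mapping from risk category to regulatory treatment. The following Python sketch illustrates that idea under stated assumptions: `RiskTier`, `TREATMENT`, `EXAMPLE_SYSTEMS`, and `treatment_for` are hypothetical names, the example systems are limited to those named in this article, and real classification depends on how a system is used and requires legal assessment.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Treatment per tier, paraphrased from the four categories above.
TREATMENT = {
    RiskTier.MINIMAL: "unregulated",
    RiskTier.LIMITED: "light regulation (transparency obligations)",
    RiskTier.HIGH: "stringent regulation",
    RiskTier.UNACCEPTABLE: "prohibited",
}

# Hypothetical lookup table using only examples named in the article.
EXAMPLE_SYSTEMS = {
    "spam filter": RiskTier.MINIMAL,
    "ai-enabled video game": RiskTier.MINIMAL,
    "chatbot": RiskTier.LIMITED,
    "biometric identification": RiskTier.HIGH,
    "critical infrastructure": RiskTier.HIGH,
    "social scoring": RiskTier.UNACCEPTABLE,
}


def treatment_for(system: str) -> str:
    """Look up the example tier for a system and describe its treatment."""
    tier = EXAMPLE_SYSTEMS.get(system.strip().lower())
    if tier is None:
        # Anything not in the table is left for human review.
        return "unknown: needs a case-by-case legal assessment"
    return f"{tier.value} risk: {TREATMENT[tier]}"


if __name__ == "__main__":
    for name in ("Chatbot", "social scoring", "toaster"):
        print(name, "->", treatment_for(name))
```

The fallback for unlisted systems is deliberate: a static table like this can serve as a first-pass triage aid, but it cannot substitute for reading the Act's own definitions.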

The Intent and Concerns Surrounding the AI Act

EU officials assert that this legislation aims to foster technological growth by establishing clear rules. The regulations stem from concerns about the safety and security of EU citizens, potential job losses, and the risk of public mistrust hindering AI development in Europe.

However, the Act has faced criticism. Kai Zenner, a policy advisor, notes that the law is "rather vague." Critics argue that the broad approach may result in poorly conceived regulations that could hinder Europe's competitiveness in producing future AI companies.

Cecilia Bonefeld-Dahl, director-general for DigitalEurope, expresses concern about the additional compliance costs for EU companies: "We will be hiring lawyers while the rest of the world is hiring coders."

Tech Giants' Proactive Measures

While the debate continues in Europe, major tech companies are taking proactive steps to address AI content labelling and transparency:

Meta's Approach

Since May 2024, Meta has been labelling AI-generated content on Facebook, Instagram, and Threads. Its plan is to:

Add "AI info" labels to a wide range of video, audio, and image content.

Implement more prominent labels for content that could potentially deceive the public on important matters.

Use visible markers on images, along with invisible watermarks and metadata embedded in image files.

Develop tools to identify invisible markers at scale, aligning with industry standards like C2PA and IPTC.

Meta will also continue its fact-checking efforts for AI-generated content.

YouTube's New Policies

YouTube is introducing new disclosures and labels for AI-generated content:

More prominent labels for videos on sensitive topics like health, news, elections, or finance.

Penalties for creators who repeatedly fail to disclose meaningfully altered or synthetically generated content.

YouTube has provided examples to help creators understand where the line falls: minor edits like beauty filters do not require disclosure, while more significant alterations, such as digitally replacing faces in videos, do.

TikTok's Initiatives

TikTok's new policy requires:

Labels for AI-generated content containing realistic images, audio, or video.

Testing of an automatic "AI-generated" label for content detected as AI-edited or AI-created.

The Road Ahead

As the EU forges ahead with its ambitious AI regulation, the tech industry is already adapting to new transparency requirements. The coming years will be crucial in determining whether these regulations foster trust and innovation or instead hold back AI development and competitiveness.

For marketers, content creators, and businesses leveraging AI, staying informed and compliant with these evolving regulations will be essential. As the landscape continues to shift, adaptability and transparency will be key to navigating the new era of AI governance.

What are your thoughts on these developments? Will the EU AI Act set a global standard for AI regulation, or will it create hurdles for innovation? Share your perspectives in the comments below.